Just for fun I decided to write a tool for work in Python instead of Perl, and I thought I’d describe the process. Partly because other people can be very opaque about how they learn things, and especially how they learn technical things or approach something unfamiliar.

At work, I had a big list of URLs to look through, to check a particular detail of sidebar widget code on each blog.

Originally, I got kind of excited as I browsed this page of twill commands. I installed twill on my laptop and tried out the examples. Wooo! That was so easy! It was like screen scraping with pseudocode. So, without really looking into it any further I figured it would be easy to churn through the few thousand URLs I needed to check. It looked like maybe a day of work, or two if I was floundering around, which is often likely when learning something new.

When I actually sat down to do it, I realized that twill didn’t have any control flow commands. So there wasn’t a way within twill, fabulous as it seemed, to tell it to go through a list of variables. So I started to try to write it in Python. I had gone to a few of Seth’s informal Python lessons months ago and then paired with Danny a little bit. We wrote a thingie with Django to let people create random Culture names. (Result: I am the Human Sol-Terran Elizabeth Badgerina Karen da’Champions-West. I am currently travelling on a Ship, the ROU Knock Knock, Psychopath Class.) In other words, as of Monday, I could write Hello World in Python, but only if I looked at the manual first.

So I wrote pseudocode first, like this:

    read in biglist.txt
    for each line in biglist.txt
        split the line into $blogurl, $blogemail
        get that url's http dump
        if it has "OLDCODE" in it
            write $blogurl, $blogemail to hasoldcode.logfile
        if not
            if it has "NEWCODE" in it
                write $blogurl, $blogemail to hasnewcode.logfile

Pseudocode rocks.

The Python docs made me want to chew my laptop in half. I read them anyway, mostly the string functions but that barely helped. Instead I just brute force googled things like “how do i find a substring in a string with python” which was often helpful if only so that I felt less lonely. It took me a while just to figure out how to concatenate two strings. I kept trying to use a dot, which didn’t work!
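For anyone searching for the same two things, here is a minimal sketch of the substring test and the string concatenation. It is written in Python 3 syntax (the script in this post is Python 2), and the sample strings and variable names are made up for illustration.

```python
# Hypothetical sample data, not taken from the real list of blogs.
html = "<div class='sidebar'>OLD BIT OF CODE</div>"

# Substring test: the `in` operator gives True or False.
has_old = "OLD BIT OF CODE" in html

# str.find() gives the index of the first match, or -1 if there is none.
position = html.find("NEW BIT OF CODE")

# Concatenation uses +, not the dot that Perl uses.
blog = "http://example.com"
email = "someone@example.com"
msg = blog + "," + email + "\n"

print(has_old, position, repr(msg))
```

The `in` test is usually enough when the only question is whether the phrase appears at all; `find()` is for when the position matters.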

The most helpful thing was turning up bits of other people’s code, the simpler the better, with brief explanations.

The next most helpful thing was cheating by asking Danny, who kindly just said “Oh, do this, import urllib2 and do f = urllib2.urlopen(blog) and then h = ''.join(f.readlines()) and print h.” Testing things out in the interpreter was useful too. The point isn’t whether you lift it out of a class, a book, someone else’s code, or another person. Just start out with a few lines of something that works. Then, fiddle with it and master it.

But there was no way I could figure out from scratch what to do with the first giant horrible alpha-vomit error that popped up. Danny kindly IM-ed me “try: THINGIE except ValueError:” which I then googled and figured out how to use. Some googling of bits of the error message would have gotten me some useful examples. Here, I may have chickened out and asked for help too soon.
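As a rough illustration of where a ValueError like that can come from: unpacking the result of split() into two names fails unless the line has exactly one comma. The sketch below is Python 3, and the helper name `parse_line` is hypothetical, not something from the original script.

```python
def parse_line(line):
    """Return (blog, email) for a 'url,email' line, or None if malformed."""
    try:
        # Unpacking raises ValueError unless split() yields exactly two parts.
        blog, email = line.strip().split(",")
    except ValueError:
        return None
    return blog, email


print(parse_line("http://example.com,someone@example.com\n"))
print(parse_line("a line with no comma"))
```

Returning None for a bad line plays the same role as skipping it with `continue` inside a loop.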

I put my pseudocode into a file as comments. I wrote a file of a few test urls and emails. Then I wrote the program kind of half-assedly a couple of times, piece by piece, only trying to do one thing at a time. It mostly worked. That was the end of Day 1.

In the morning life was much better. Then I rewrote my pseudocode to include all the things I’d forgotten. I threw everything out and started over. Suddenly I felt like I knew what I was doing. A couple of hours later it all worked great.

While I was in that state of knowing-what-I-was-doing and being able to see it all clearly, it was exactly like the point in writing a poem where I know the map ahead. It is knowing the map and how to navigate and having not just the destination but having built a mental image of the entire trip. So, as with going down a road where I’ve never been before, but have imagined out the map, I feel a sense of the entire poem, all at once. It’s a holographic feeling. In that state of mind, I am very happy, and want to work without stopping.

It’s funny to talk about such a simple bit of programming that way but that’s how it felt. I also knew where I was doing something in an inelegant way, but that it was okay and I’d fix it later.

When it was all working I paused for a bit, then went back and fixed the inelegant bit.

After that, I put in some status messages so I could watch them scroll past with every URL. (A good idea since the error checking is not very thorough.)

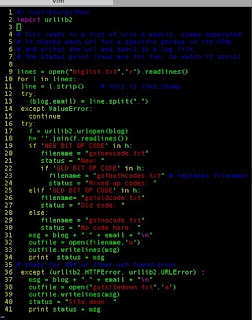

Here is the code. I can see more ways to improve it and make it more general. It would be nicer to just use the filename as the scrolling status message, perhaps giving the files names that would look better as they scroll past. There are also stylistic questions like, I know many people would combine

    outfile = open("gotsitedown.txt",'a')
    outfile.writelines(msg)

into one line, but I couldn’t read that again and understand what it meant a month from now, so I tend not to write code that way. Maybe once I’m better at it.

    #! /usr/bin/python
    import urllib2

    # This reads in a list of urls & emails, comma separated.
    # It checks each url for a specific phrase in its HTML
    # and writes the url and email to a log file.
    # The status print lines are for fun, to watch it scroll.

    lines = open("biglist.txt",'r').readlines()
    for l in lines:
        line = l.strip()
        try:
            (blog,email) = line.split(",")
        except ValueError:
            continue
        try:
            f = urllib2.urlopen(blog)
            h = ''.join(f.readlines())
            if 'NEW BIT OF CODE' in h:
                filename = "gotnewcode.txt"
                status = "New! "
                if 'OLD BIT OF CODE' in h:
                    filename = "gotbothcodes.txt" # replaces filename!
                    status = "Mixed up codes: "
            elif 'OLD BIT OF CODE' in h:
                filename = "gotoldcode.txt"
                status = "Old code: "
            else:
                filename = "gotnocode.txt"
                status = "No code here: "
            msg = blog + "," + email + "\n"
            outfile = open(filename,'a')
            outfile.writelines(msg)
            print status + msg
        # check for 404 or other not found error
        except (urllib2.HTTPError, urllib2.URLError):
            msg = blog + "," + email + "\n"
            outfile = open("gotsitedown.txt",'a')
            outfile.writelines(msg)
            status = "Site down: "
            print status + msg
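The script above is Python 2, where `urllib2` and the `print` statement exist. For readers on Python 3, here is a hedged sketch of the same sorting logic. The function names `classify` and `fetch_html` are hypothetical, and it assumes pages can be decoded as UTF-8, which the original never had to decide. `fetch_html` is defined but not called here, so nothing touches the network.

```python
import urllib.error
import urllib.request

OLD = "OLD BIT OF CODE"
NEW = "NEW BIT OF CODE"


def classify(html):
    """Return (logfile name, status prefix) for one page's HTML."""
    has_new = NEW in html
    has_old = OLD in html
    if has_new and has_old:
        return "gotbothcodes.txt", "Mixed up codes: "
    if has_new:
        return "gotnewcode.txt", "New! "
    if has_old:
        return "gotoldcode.txt", "Old code: "
    return "gotnocode.txt", "No code here: "


def fetch_html(blog):
    """Return the page as text, or None if the site is down or not found."""
    try:
        with urllib.request.urlopen(blog) as f:
            # Assumption: UTF-8; undecodable bytes are replaced, not fatal.
            return f.read().decode("utf-8", errors="replace")
    except urllib.error.URLError:  # HTTPError is a subclass of URLError
        return None


print(classify("sidebar with NEW BIT OF CODE and OLD BIT OF CODE"))
print(classify("sidebar with nothing interesting"))
```

Keeping the classification in its own function means it can be tried on a pasted string in the interpreter without fetching anything.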

My co-worker Julie was sitting across the table from me doing something maddeningly intricate with Drupal and at the end of the day she agreed with me that it is best to code something the wrong way at least twice in order to understand what you’re doing. “If I haven’t done it wrong three times, something is wrong.”

I wish now that I had written down all the wrong ways, or saved the wrong code, to compare how it improved. One wrong way went like this: instead of writing the 5 different logfiles of blogs with new code, old code, no code, site down, and mixed old and new code, I thought of making directories and writing all the url names to the directory. That was before I thought of putting the emails in a csv file with the urls. Why did it make sense at first? Who knows! It might be a good rule, though. The first thing you think of is probably wrong. You can’t see a better way until you have walked around in the labyrinth down some possible yet wrong ways.

This WordPress Code Highlighter Plugin might be the inspiration that pushes me finally off of Blogspot and onto WordPress for this blog, so that I can do this super nicely in text and not in an image.

I wouldn’t read the Python docs to learn Python for the first time ever. I would read a Python tutorial. Dive into Python is probably the best choice since you already know programming.

I recently found a nice script which will html-ize Python code with syntax highlighting: http://code.activestate.com/recipes/52298/

One thing I do a lot now with try/except blocks, is something like this:

    try:
        dothings(blah)
    except SomeError:
        print blah
        raise SomeError

(where SomeError is ValueError or whatever caused me to notice there was a problem… I also like to raise my own custom errors, like “raise WhyDoesntThisListHaveEnoughItemsError” :))
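A small sketch of that pattern with a custom error, in Python 3. The class and function names are hypothetical. One deliberate difference from the snippet above: it uses a bare `raise`, which re-raises the exception that was caught instead of creating a new one, so the original message and traceback survive.

```python
class NotEnoughItemsError(Exception):
    """Hypothetical custom error for a list that is too short."""


def first_two(items):
    if len(items) < 2:
        raise NotEnoughItemsError("expected 2 items, got %d" % len(items))
    return items[0], items[1]


def checked_first_two(items):
    try:
        return first_two(items)
    except NotEnoughItemsError:
        print("bad input:", items)  # show what caused the problem
        raise  # bare raise re-raises the exception that was just caught


print(checked_first_two(["a", "b", "c"]))
```

Subclassing `Exception` is all a custom error needs; the descriptive name does most of the work when it shows up in a traceback.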

I wouldn’t combine the outfile open and write lines. Instead I would move the outfile open line out of the loop. (And probably also close the file after the loop…: “outfile.close()”)
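A minimal sketch of that suggestion in Python 3, assuming a single output file and some made-up rows. It uses a `with` block, which closes the file automatically, in place of an explicit `outfile.close()`. The temporary directory is only there so the example does not leave files lying around.

```python
import os
import tempfile

# Hypothetical rows standing in for the (blog, email) pairs.
rows = [("http://example.com", "a@example.com"),
        ("http://example.org", "b@example.org")]

path = os.path.join(tempfile.mkdtemp(), "gotnocode.txt")

# Open once, before the loop; `with` closes the file when the block ends.
with open(path, "a") as outfile:
    for blog, email in rows:
        outfile.write(blog + "," + email + "\n")

with open(path) as infile:
    print(infile.read())
```

Since the script in the post picks one of five logfiles per URL, opening outside the loop there would mean opening all five up front, which is a trade-off and not an obvious win.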

but anyway, yay, Python 🙂

And now that you’ve gone through all that, you can check out Beautiful Soup for all your screen scraping goodness.

I made some ~improvements to the code (and someone submitted your post to reddit, which is how I came across it):

http://www.reddit.com/comments/6x4fv/learning_python_by_writing_a_screen_scraper/c053vdu

The file object returned by open is iterable. This saves having to create a list with readlines():

    fp = open('file.txt')
    for line in fp:
        …